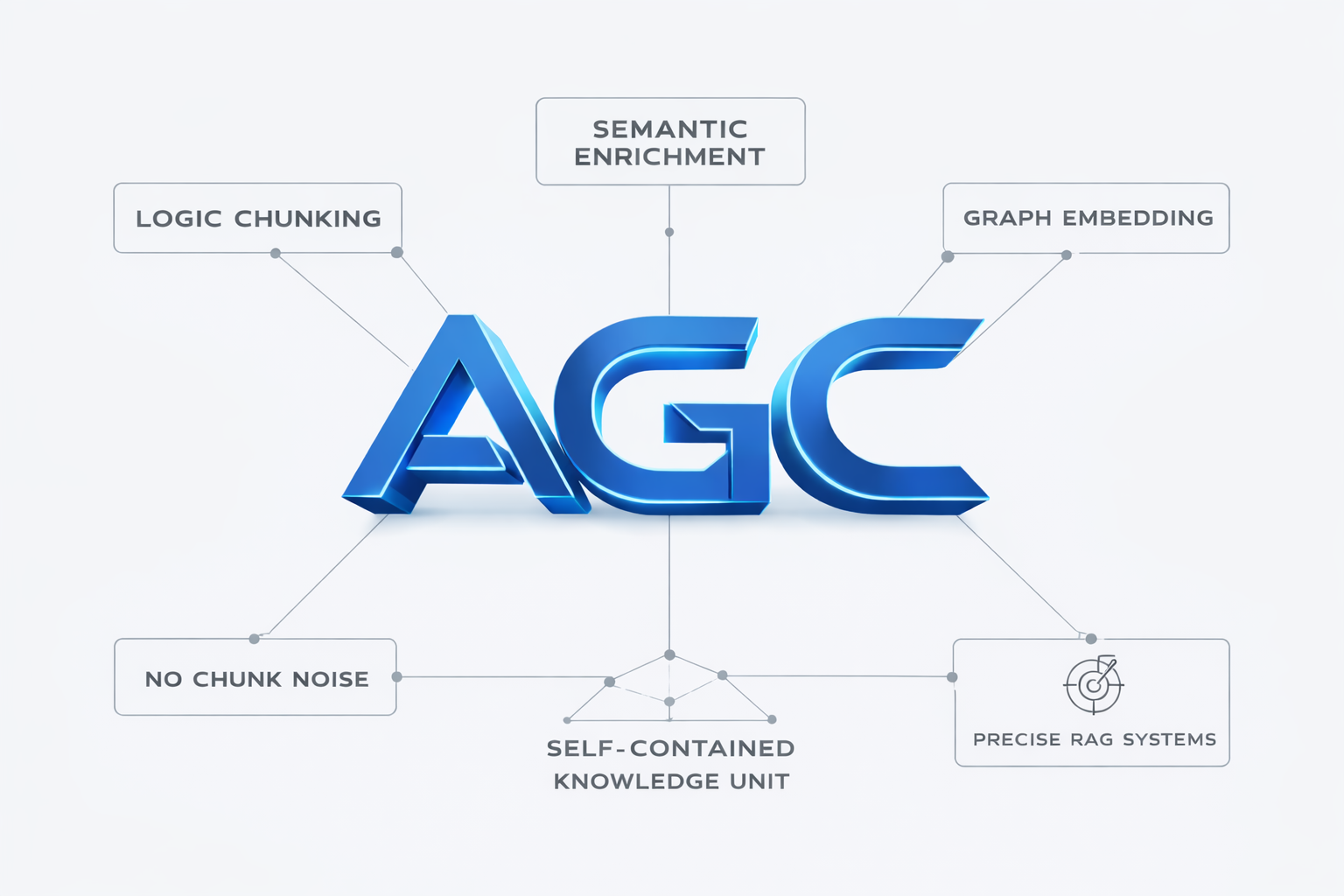

Curious How Semantic Integrity Can Be Maintained While Splitting Complex Documents for Retrieval‑Augmented Generation? Discover the Agentic Graph Chunking Methodology In Action

Agentic Graph Chunking (AGC) is a semantic preprocessing technique designed to prepare complex documents for high-precision retrieval-augmented generation (RAG) systems. Instead of splitting text by token count or fixed size, AGC uses an LLM as an interpretive agent that analyzes the document's hierarchy, semantic boundaries, and internal relationships before generating chunks. The result is a set of logically isolated, hierarchy-aware knowledge units with zero overlap and enriched domain context, which preserve the original structure while making retrieval more precise and reliable. By organizing information according to real conceptual boundaries rather than artificial size limits, AGC reduces contextual noise and improves grounding in LLM-based systems.

📜 Topics included in this post

- Definition of Agentic Graph Chunking (AGC) and why it differs from mechanical splitters.

- Five core design principles: semantic interpretation, zero‑overlap, hierarchy preservation, enrichment, determinism.

- Detailed walk‑through of each principle with concrete examples.

- Case study: deployment inside Dante IA for Brazilian extrajudicial law.

- Guidelines for deciding when to adopt AGC in production RAG pipelines.


What Is Agentic Graph Chunking (AGC)?

AGC operates as an interpretive preprocessing step in which an LLM analyzes the internal structure of a document before segmentation occurs. Sections, subsections, and conceptual dependencies are preserved so that each chunk remains a complete, self-contained unit of knowledge. By aligning chunk boundaries with real semantic and hierarchical limits, AGC produces cleaner context for retrieval and lets RAG systems reason over information that stays structurally faithful to the original source.

1 · Agentic Semantic Interpretation

Conventional chunking approaches operate on predetermined token or character counts, often adding overlap to counteract context loss. AGC, by contrast, performs an interpretive analysis in which the language model:

- Infers the document’s structural hierarchy,

- Detects genuine semantic boundaries,

- Preserves conceptual completeness, and

- Re‑orders material deterministically where required.

Internally, the model treats the document as an implicit semantic graph whose nodes are sections and subsections and whose edges represent scope relationships. Boundaries are cut only where the graph indicates conceptual closure.
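To make the graph framing concrete, here is a minimal sketch under strong assumptions. AGC infers structure with an LLM; the regex `HEADING` below is a hypothetical stand-in that only recognises explicit decimal numbering (`3`, `3.2`, `3.2.1`), and `Node`, `build_scope_graph`, and `cut_chunks` are illustrative names. It reads "conceptual closure" in the simplest possible way: a node's own text closes at the next heading, so every cut falls on a node boundary.

```python
import re
from dataclasses import dataclass, field

# Hypothetical stand-in for the LLM's structure inference: numbered headings only.
HEADING = re.compile(r"^(\d+(?:\.\d+)*)\s+(.+)$")


@dataclass
class Node:
    key: str    # e.g. "3.2"; "" for the document root
    title: str
    body: list[str] = field(default_factory=list)
    children: list["Node"] = field(default_factory=list)


def build_scope_graph(lines: list[str]) -> Node:
    """Return a tree whose edges are scope relations (3 -> 3.2 -> 3.2.1)."""
    root = Node("", "ROOT")
    index = {"": root}
    current = root
    for line in lines:
        match = HEADING.match(line)
        if match:
            key, title = match.group(1), match.group(2)
            # The parent scope is the key minus its last component ("3.2.1" -> "3.2").
            parent = index.get(key.rpartition(".")[0], root)
            current = Node(key, title)
            parent.children.append(current)
            index[key] = current
        else:
            current.body.append(line)
    return root


def cut_chunks(node: Node, path: tuple[str, ...] = ()) -> list[tuple[tuple[str, ...], str]]:
    """Depth-first walk. A node's own text ends at the next heading, so every cut
    falls on a node boundary and no section is split mid-text."""
    chunks = []
    if node.key and node.body:
        chunks.append((path, " ".join(node.body)))
    for child in node.children:
        chunks.extend(cut_chunks(child, path + (child.key,)))
    return chunks


doc = ["1 Scope", "Applies to all deeds.", "1.1 Exceptions", "Not to minors.",
       "2 Duties", "Notaries must file."]
for path, text in cut_chunks(build_scope_graph(doc)):
    print(" > ".join(path), "|", text)
# 1 | Applies to all deeds.
# 1 > 1.1 | Not to minors.
# 2 | Notaries must file.
```

A body line that happens to begin with a number (say, "2 witnesses are required") would be misread as a heading by this regex, which is exactly the kind of ambiguity the LLM pass is meant to resolve.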

2 · Zero‑Overlap Logical Chunking

Overlap-based chunking duplicates sentences across neighbouring segments. While simple, this practice causes retrieval engines to surface redundant context, occasionally leading to contradictory evidence during generation. AGC avoids the need for overlap by ensuring each chunk is complete in itself: no repeated sentences, no half-cut ideas. The upshot is a smaller, cleaner retrieval index and fewer hallucinations downstream.
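The storage cost of overlap is easy to quantify. This sketch assumes a hypothetical 1,000-token document, a 256-token window, and a 64-token overlap (the overlap size is an assumption), counts how many tokens a sliding-window splitter stores, and checks the partition property that zero-overlap chunks are meant to satisfy. `sliding_window` and `is_partition` are illustrative helpers, not part of any library, and the cut points for the zero-overlap case are made up rather than produced by AGC.

```python
def sliding_window(tokens: list[int], size: int, overlap: int) -> list[list[int]]:
    """Fixed-size windows in which consecutive windows share `overlap` tokens."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must satisfy 0 <= overlap < size")
    step = size - overlap
    chunks, start = [], 0
    while True:
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):  # the last window reaches the end of the text
            return chunks
        start += step


def is_partition(chunks: list[list[int]], tokens: list[int]) -> bool:
    """True when the chunks, concatenated in order, reproduce the text exactly once."""
    return [t for chunk in chunks for t in chunk] == tokens


tokens = list(range(1000))  # stand-in for a 1,000-token document
overlapped = sliding_window(tokens, size=256, overlap=64)
print(len(overlapped), sum(len(c) for c in overlapped), is_partition(overlapped, tokens))
# 5 1256 False

# Zero-overlap segmentation at (hypothetical) semantic boundaries of varying length.
cuts = [0, 310, 700, 1000]
segments = [tokens[a:b] for a, b in zip(cuts, cuts[1:])]
print(len(segments), sum(len(s) for s in segments), is_partition(segments, tokens))
# 3 1000 True
```

Under these assumed numbers the overlapping index stores 25.6 % more tokens than the source text, while the zero-overlap segments store each token exactly once.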

3 · Hierarchy Preservation

In regulatory and technical domains, numbering and nesting aren’t cosmetic; they carry legal scope. AGC explicitly embeds hierarchical labels (for example, Section 3.2 > Article 7 > Paragraph b) into every output chunk. This practice lets a downstream agent reason about jurisdiction or precedence without consulting a global table of contents. During evaluation we found that hierarchy‑aware retrieval improved answer grounding by 27 percentage points on a synthetic legal QA set.
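A minimal sketch of carrying the hierarchical label with every chunk. The `LabeledChunk` class, the ` > ` breadcrumb rendering, and the prefix test in `within` are assumptions for illustration; the post does not specify AGC's output schema. The prefix test is what lets a downstream agent answer "is this paragraph inside Section 3.2?" from the chunk alone.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LabeledChunk:
    """A chunk that carries its full hierarchical path (outermost scope first)."""
    path: tuple[str, ...]
    text: str

    def render(self) -> str:
        """The string that gets embedded: the breadcrumb travels with the content."""
        return " > ".join(self.path) + "\n" + self.text

    def within(self, *scope: str) -> bool:
        """True if the chunk sits inside `scope`, e.g. within("Section 3.2")."""
        return self.path[: len(scope)] == scope


chunk = LabeledChunk(("Section 3.2", "Article 7", "Paragraph b"),
                     "The obligation applies only to notarised deeds.")
print(chunk.render().splitlines()[0])  # Section 3.2 > Article 7 > Paragraph b
print(chunk.within("Section 3.2"), chunk.within("Section 3.2", "Article 8"))  # True False
```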

4 · Targeted Semantic Enrichment

Each chunk undergoes light enrichment: acronyms are expanded once, domain synonyms are inserted in square brackets, and anchor terms are appended as a compact glossary. Because enrichment restates what is already implicit, it does not introduce external facts. Instead, it stabilises embeddings and raises the hit rate for long-tail queries.
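The three enrichment operations can be sketched deterministically as below. This is an assumption rather than the post's method: the acronym, synonym, and glossary dictionaries are hypothetical curated inputs, and in AGC the enrichment may well be produced by the LLM during chunking. LGPD (Lei Geral de Proteção de Dados, Brazil's data-protection law) appears purely as sample data.

```python
import re


def enrich(text: str, acronyms: dict[str, str], synonyms: dict[str, list[str]],
           anchors: dict[str, str]) -> str:
    """Apply the three light enrichment steps to one chunk of text."""
    for short, expansion in acronyms.items():
        # Expand only the first occurrence: "LGPD" -> "Lei Geral ... (LGPD)".
        text = re.sub(rf"\b{re.escape(short)}\b",
                      lambda m, e=expansion: f"{e} ({m.group(0)})", text, count=1)
    for term, alternatives in synonyms.items():
        # Tag the first mention of each domain term with its synonyms in square brackets.
        text = re.sub(rf"\b{re.escape(term)}\b",
                      lambda m, alts=alternatives: f"{m.group(0)} [{', '.join(alts)}]",
                      text, count=1)
    if anchors:
        # Append anchor terms as a compact one-line glossary.
        text += "\n\nGlossary: " + "; ".join(f"{k}: {v}" for k, v in anchors.items())
    return text


enriched = enrich(
    "The LGPD applies to the notary.",
    acronyms={"LGPD": "Lei Geral de Proteção de Dados"},
    synonyms={"notary": ["tabelião"]},
    anchors={"notary": "officer who authenticates deeds"},
)
print(enriched)
```

Touching only the first occurrence of each term keeps the added text bounded, so enrichment nudges the embedding without drowning the original wording.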

5 · Determinism and Auditability

Given the same document, prompt, and model configuration, AGC returns the same segmentation. This property is invaluable in regulated environments where changes to preprocessing must be audited. In practice, we version the AGC prompt alongside our code and store the SHA-256 hash of each processed document for later replay.
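A minimal sketch of such an audit record. Storing the document's SHA-256 hash and a versioned prompt comes from the post; additionally hashing the emitted chunks, and the names `build_manifest`, `replay_matches`, and `agc-prompt-v3`, are illustrative assumptions. Serialising with `sort_keys=True` makes the chunk hash independent of dictionary key order.

```python
import hashlib
import json


def sha256_hex(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def build_manifest(doc: str, prompt_version: str, chunks: list[dict]) -> dict:
    """Record the inputs and outputs of one AGC run for later audit."""
    return {
        "doc_sha256": sha256_hex(doc),
        "prompt_version": prompt_version,  # e.g. the git tag of the versioned prompt
        # Canonical JSON (sorted keys) so identical chunks always hash identically.
        "chunks_sha256": sha256_hex(json.dumps(chunks, sort_keys=True, ensure_ascii=False)),
    }


def replay_matches(manifest: dict, doc: str, prompt_version: str, chunks: list[dict]) -> bool:
    """True if a re-run reproduced exactly the segmentation that was audited."""
    return build_manifest(doc, prompt_version, chunks) == manifest


manifest = build_manifest("full document text", "agc-prompt-v3", [{"label": "1", "text": "a"}])
print(replay_matches(manifest, "full document text", "agc-prompt-v3", [{"text": "a", "label": "1"}]))  # True
print(replay_matches(manifest, "full document text", "agc-prompt-v3", [{"label": "1", "text": "b"}]))  # False
```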

Case Study: Dante IA

The Brazilian platform Dante IA applies AGC to extrajudicial contracts. After migrating from a 256‑token sliding‑window splitter to AGC, the team reported:

- Legal precision above 99 % on a curated test set,

- Near elimination of hallucinations in obligation extraction, and

- Clearer traceability because every answer cites a single, complete legal clause.

Although Brazilian law has idiosyncratic numbering schemes, hierarchy preservation meant that downstream chains could still reference clauses unambiguously.

When Should You Consider AGC?

AGC is most effective when:

- Your corpus is deeply hierarchical (legal codes, technical standards, policy manuals).

- Overlap‑driven redundancy bloats your vector store.

- You need deterministic, auditable preprocessing.

- Hallucination risk must be proactively mitigated rather than patched via post‑hoc filtering.

- Retrieval latency is constrained; fewer, better chunks speed similarity search.

If your documents are short, flat, and informal (for example, blog comments), classic size-based chunking may suffice. Otherwise, AGC provides a principled alternative.
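For the first criterion, a cheap screen is to measure how deeply a corpus's numbered headings nest before committing to an LLM pass. The sketch below is a hypothetical heuristic, not part of AGC: it only sees decimal numbering, it ignores the other criteria in the list, and the `min_depth=3` threshold is an arbitrary assumption to tune on your own documents.

```python
import re

# Lines that open with decimal numbering such as "3", "3.2." or "3.2.1)".
NUMBERED = re.compile(r"^\s*(\d+(?:\.\d+)*)[.)]?\s", re.MULTILINE)


def max_numbering_depth(text: str) -> int:
    """Deepest numbered level found ("3.2.1" counts as 3); 0 if there is none."""
    return max((m.group(1).count(".") + 1 for m in NUMBERED.finditer(text)), default=0)


def looks_hierarchical(text: str, min_depth: int = 3) -> bool:
    """Hypothetical screening rule: flag documents whose numbering nests deeply."""
    return max_numbering_depth(text) >= min_depth


statute = "1 Scope\n1.1 Definitions\n1.1.2 Notaries\nA notary is an officer."
comment = "Great post, thanks!"
print(max_numbering_depth(statute), looks_hierarchical(statute))  # 3 True
print(max_numbering_depth(comment), looks_hierarchical(comment))  # 0 False
```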

Implementation Notes

We typically implement AGC as a two‑stage pipeline:

- Structure pass: use a large model to produce a JSON outline capturing headings and scopes.

- Chunk pass: ask the model to emit self‑contained chunks keyed by the outline, adding enrichment and hierarchical labels.
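The two stages can be sketched as below, under several assumptions: `LLM` is a hypothetical callable wrapping whatever model client you use, the prompt wording and the JSON keys (`id`, `title`, `parent`, `outline_id`, `label`, `text`) are illustrative rather than production prompts, and `canned_llm` is a stub so the example runs offline. What it shows is structural: stage 2 is keyed by the outline from stage 1, and both replies are validated before anything reaches the index.

```python
import json
from typing import Callable

# Hypothetical model interface: prompt in, raw text reply out (wrap your own client).
LLM = Callable[[str], str]


def structure_pass(doc: str, llm: LLM) -> list[dict]:
    """Stage 1: a JSON outline of headings and their parent scopes."""
    reply = llm(
        "Return ONLY a JSON list of objects with keys id, title and parent "
        "(null for top-level sections) outlining this document:\n" + doc
    )
    outline = json.loads(reply)
    ids = {node["id"] for node in outline}
    for node in outline:
        # Every scope edge must point at a node that exists in the outline.
        if node["parent"] is not None and node["parent"] not in ids:
            raise ValueError(f"dangling parent {node['parent']!r}")
    return outline


def chunk_pass(doc: str, outline: list[dict], llm: LLM) -> list[dict]:
    """Stage 2: self-contained chunks, each keyed to one outline node."""
    reply = llm(
        "Using this outline:\n" + json.dumps(outline, ensure_ascii=False)
        + "\nReturn ONLY a JSON list of objects with keys outline_id, label and text, "
        "one self-contained, enriched chunk per outline node, for this document:\n" + doc
    )
    chunks = json.loads(reply)
    known = {node["id"] for node in outline}
    for chunk in chunks:
        if chunk["outline_id"] not in known:
            raise ValueError(f"chunk keyed to unknown node {chunk['outline_id']!r}")
    return chunks


def agc_pipeline(doc: str, llm: LLM) -> list[dict]:
    return chunk_pass(doc, structure_pass(doc, llm), llm)


def canned_llm(prompt: str) -> str:
    """Stub standing in for a real model, for demonstration only."""
    if prompt.startswith("Return ONLY"):
        return json.dumps([{"id": "1", "title": "Scope", "parent": None},
                           {"id": "1.1", "title": "Exceptions", "parent": "1"}])
    return json.dumps([{"outline_id": "1", "label": "Section 1", "text": "Applies to all deeds."},
                       {"outline_id": "1.1", "label": "Section 1 > 1.1", "text": "Not to minors."}])


print([c["label"] for c in agc_pipeline("1 Scope ...", canned_llm)])  # ['Section 1', 'Section 1 > 1.1']
```

Injecting the model as a plain callable keeps the sketch independent of any vendor SDK and gives one place to pin the model, its settings, and the prompt version, which is what the determinism principle relies on.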

Because both stages are driven by prompts rather than language-specific parsing rules, the pipeline is language-agnostic. In multilingual corpora, we leave the source text untouched and translate labels only when search requires it.

For vectorisation, we found that smaller sentence-transformer models (all-MiniLM-L6-v2) perform competitively once enrichment is applied, cutting compute costs by 60 % compared with larger encoders.

Concluding Perspective

Agentic Graph Chunking reframes preprocessing as a semantic modelling task instead of a mechanical slicing exercise. By aligning chunks with meaningful conceptual boundaries, preserving hierarchy, and guaranteeing determinism, AGC raises the ceiling on retrieval fidelity. If your organisation relies on accurate question answering over structured texts, it may be worth adding AGC to your evaluation backlog.
